TL;DR: A January 2026 Wharton study by Steven Shaw and Gideon Nave coined the term cognitive surrender to describe a third system of cognition: the act of skipping thinking entirely and accepting whatever the AI says. Across 1,372 participants, people accepted wrong AI answers 80 percent of the time, and those who relied on AI rated their own confidence 11.7 percent higher than those who reasoned for themselves. For PhD researchers, whose entire qualification is the cognition itself, the implications are sharper than for almost anyone else. The structural antidote is thinking in public: regular peer rooms where your reasoning has to be defended out loud.

Written by Max Lempriere, founder of The PhD People, who has worked with over 500 doctoral researchers since 2017.

A Wharton study published in January 2026 gave a name to something a lot of doctoral researchers have been quietly doing for two years.

It is called cognitive surrender. The marketing researchers Steven Shaw and Gideon Nave coined the term to describe a third system of cognition. It sits alongside Daniel Kahneman’s fast and slow systems, the famous System 1 and System 2 from Thinking, Fast and Slow. Cognitive surrender is what happens when you outsource the thinking step entirely. You ask the chatbot. You take the answer. You stop checking.

The most uncomfortable finding from their study of 1,372 participants is this:

When the AI gave a correct answer, participants accepted it 93 percent of the time. When the AI gave a wrong answer, participants accepted it 80 percent of the time. Worse, the people relying on AI rated their own confidence 11.7 percent higher than the people who worked the answer out themselves. They were more confident and more wrong at the same time.

I have been thinking about this all week. Because if there is one place in the world where you cannot afford to surrender cognition, it is in the middle of a doctorate.

What does cognitive surrender look like in a PhD?

You do not notice it happening at first. It begins with reasonable things.

You ask the chatbot to summarise a paper because you do not have time to read it properly. Then you ask it to draft a paragraph because you cannot quite get the phrasing right. Then you ask it to identify the gap in a literature you only half know. Then you ask it for the methodology that fits your research question. Then you stop asking what you think and start asking what it says.

By the time you are six months in, you cannot remember which arguments are yours. You read your own thesis chapters and recognise the words but not the thinking. The viva starts to feel terrifying, not because you are unprepared but because you are not sure there is anyone home behind the prepared material.

This is the experience the Wharton paper is describing. Cognitive surrender arrives as a slow drift, never as a single big decision. You are deciding, one small request at a time, to let something else do the work for you.

Why is the doctorate the worst place to surrender cognition?

A doctorate is the only qualification in higher education where the entire point is the cognition.

A taught master's tests whether you have learned a body of knowledge. A professional qualification tests whether you can apply a set of techniques. The doctorate tests whether you can think rigorously about a complex problem nobody has solved before. The thesis is the artefact. The mind that produced it is the actual deliverable.

This is why the standard advice to “use AI like a junior research assistant” breaks down faster for PhD students than it does for almost anyone else. A junior research assistant in industry can hand you a flawed report and you can correct it because you already know the field. A junior research assistant working alongside a doctoral candidate is handing the candidate the very thing the candidate is supposed to be developing. There is no senior researcher in the room to catch the errors. The whole point of the PhD is that you are becoming the senior researcher.

Here’s the thing. When the chatbot drafts your literature review, it is not just doing your homework. It is depriving you of the experience that produces a doctoral mind. The hours of reading badly written papers. The moments of recognising that two scholars are arguing past each other. The slow construction of an opinion you can defend. None of that happens when you take the chatbot’s synthesis and edit it lightly into your prose.

You end up with a thesis that passes inspection and a brain that has not done the work the title is meant to mark.

The confidence trap: why surrender feels like efficiency

The Wharton finding that worries me most is the 11.7 percent confidence boost.

Cognitive surrender does not feel like surrender. It feels like efficiency. It feels like clarity. People in the study were not aware they were getting things wrong more often. They felt more confident, not less. The chatbot’s authoritative tone, combined with the relief of not having to think, created a feedback loop that made participants trust outputs they should have questioned.

Imagine that pattern in a viva.

You walk in feeling prepared. You have rehearsed your argument. You can recite the structure of every chapter. The examiners ask you why you chose this theoretical framework over the obvious alternatives, and you discover, in real time, that you do not know. The argument was not yours. It was a position you adopted because the chatbot suggested it and it sounded plausible. You have nothing to develop, nothing to defend, no opinion to change your mind about, because you never built the position yourself.

This is the cognitive surrender trap. The confidence is real. The underlying competence has been hollowed out.

The structural antidote: thinking in public

The Wharton authors do not offer a solution. They are observers, not coaches. But there is an obvious one if you have spent any time around doctoral writers, and it is the same one that has always worked.

Think in public. Bring your half-formed ideas into a room where other people will push back on them.

This is the part that is hard to do alone, and impossible to do with a chatbot. AI is trained to be encouraging. It will not tell you that your central argument has a hole. It will not notice when you are using a concept inconsistently. It will not say “I do not understand what you are claiming here”. The Wharton study is in some ways a demonstration of exactly this: participants accepted wrong answers 80 percent of the time, and nothing in the system pushed back.

Other people push back. That is the entire mechanism. Other doctoral researchers, sitting in the same room as you, working on their own thinking, will notice when your argument is doing the heavy lifting and when it is being carried by something else. The questions they ask are the questions a chatbot never will. Where did this come from? Why this framework and not the other one? What would you say if I disagreed?

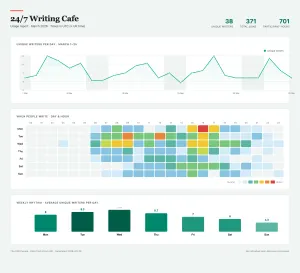

This is why we built the PhD Common Room the way we did. It is a community of doctoral researchers who write together and talk about what is hard. Members pay between £30 and £75 a month. The community does not police your AI use. It gives you somewhere to bring your real thinking, where it can be tested by people who are also doing the work.

The opposite of cognitive surrender is having a place where your mind has to show up, week after week, in front of other minds that will notice if it does not.

If you are reading this and recognising yourself, you are not failing. You are responding rationally to a system that has handed you a tool designed to make thinking feel optional. The fix is structural. Build a regular space, populated by people who will ask you to defend your reasoning out loud.

That is the thing the chatbot cannot do. And it is the thing the doctorate was always supposed to be.

Frequently asked questions

What is cognitive surrender?

Cognitive surrender is a term coined by Wharton researchers Steven Shaw and Gideon Nave in January 2026. It describes a third system of cognition, sitting alongside Daniel Kahneman’s System 1 (fast, intuitive) and System 2 (slow, effortful). Cognitive surrender is what happens when a person skips the thinking step entirely and accepts an AI-generated answer without independent reasoning.

What did the Wharton cognitive surrender study find?

Across 1,372 participants, the study found that people accepted correct AI answers 93 percent of the time and wrong AI answers 80 percent of the time. Participants who relied on AI also rated their own confidence 11.7 percent higher than participants who reasoned through the problem themselves. In other words, AI users were at once more confident and more often wrong.

Why is cognitive surrender particularly dangerous for PhD students?

A doctorate is the one qualification in higher education where the cognition itself is the deliverable. A thesis can be technically correct and still fail if the candidate cannot defend the reasoning behind it in a viva. When AI does the literature review, the framing or the analysis, the candidate is deprived of the experiences — wrestling with bad papers, building defensible positions, recognising disciplinary debates — that produce a doctoral mind.

How can PhD researchers avoid cognitive surrender without banning AI?

The structural antidote is thinking in public. AI tools are trained to be encouraging and will not push back on weak arguments. Other doctoral researchers will. Regular peer writing rooms, supervisory conversations that ask “where did this thinking come from” and any setting where you have to defend your reasoning out loud all build the muscle that AI quietly atrophies.

Where can I read the original cognitive surrender research?

The study was conducted by Steven Shaw and Gideon Nave at the Wharton School and published in January 2026. It received tech press coverage in Gizmodo and Ars Technica in April 2026.
